Large organisations have always struggled with having an agreed, corporate-wide set of data for things that cross organisational boundaries: customers, products, locations, assets etc. A dedicated multi-billion-dollar software market, master data management (MDM), grew up to address this issue. Why is it so hard to get a single list of customers or products? The average corporation has several hundred different applications, according to estimates based on several surveys. Data domains like “customer” and “product” (examples of “master data”) tend to proliferate across many applications, since a lot of applications in a company involve customers: marketing to customers, selling to customers, billing customers, supporting customers, analysing customers etc.

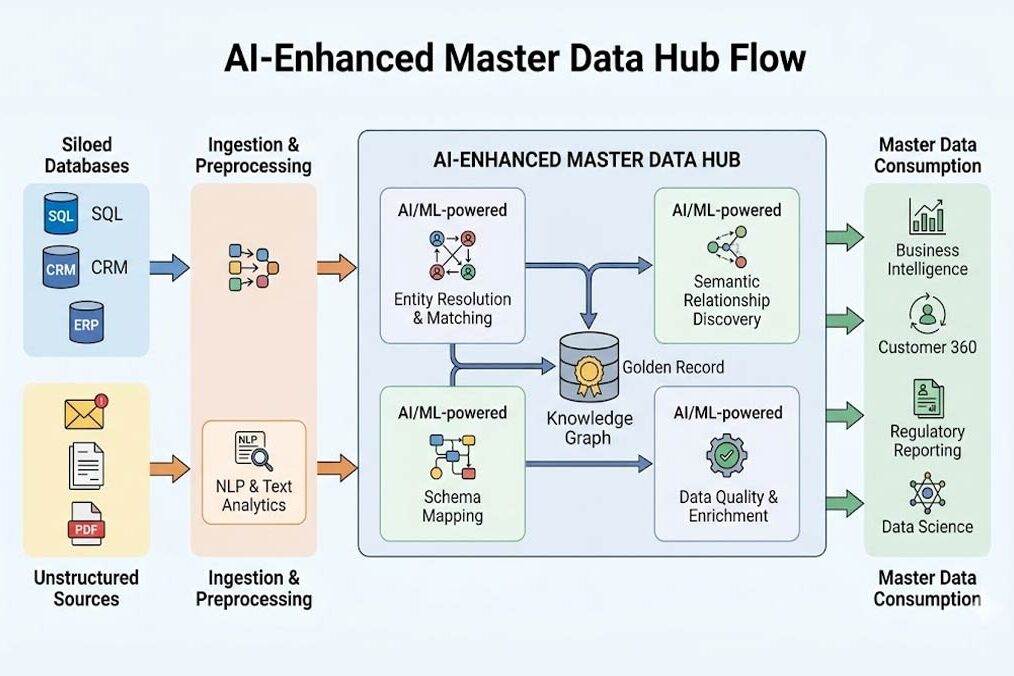

While exact estimates vary, maybe a fifth of applications contain customer data. Moreover, many of these applications are packaged applications from vendors (such as ERP or CRM) or external applications like ecommerce platforms, billing systems like Stripe, loyalty platforms etc. Every one of these systems will typically have its own customer codes, classifications etc, and so it is hard for a corporation to corral all these and impose a single, unified set of customer records across the enterprise. A specific customer record may appear in a CRM system, billing systems and support emails under slightly different names, and these need to be unified. Still, an industry of software packages is testament to the efforts made. MDM tools, along with the data quality tools that they often incorporate, identify duplicate records and merge them together into a single “golden” record: say, the details of a specific customer. They do this based on business rules such as “survivorship rules”. These specify that some systems are more trusted than others (maybe ERP is regarded as the most trusted), or have been updated more recently than others, or have more complete records than others, and such characteristics can be used to decide which records to use to contribute to a master record. The resulting golden records are stored in a master data hub. This hub can be used to populate downstream systems like data warehouses or analytic applications.
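To make the idea of survivorship rules concrete, here is a minimal sketch in Python. It assumes a simple rule set in which each field is taken from the most trusted source that has a value, with the most recently updated record winning ties. The source names, trust ranking, field list and the `build_golden_record` helper are all hypothetical and for illustration only; commercial MDM tools let you configure far richer rules.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical trust ranking (an assumption): lower number = more trusted.
SOURCE_TRUST = {"erp": 0, "crm": 1, "billing": 2}

# Hypothetical set of fields that make up a customer master record.
FIELDS = ("name", "email", "postcode")


@dataclass
class SourceRecord:
    source: str                      # which system the record came from
    updated: date                    # when that system last changed it
    name: Optional[str] = None
    email: Optional[str] = None
    postcode: Optional[str] = None


def build_golden_record(records):
    """Take each field from the most trusted source that has a value,
    preferring the most recently updated record when trust is equal."""
    ranked = sorted(
        records,
        key=lambda r: (
            SOURCE_TRUST.get(r.source, len(SOURCE_TRUST)),  # unknown sources rank last
            -r.updated.toordinal(),                          # newer records first
        ),
    )
    golden = {}
    for field in FIELDS:
        for rec in ranked:
            value = getattr(rec, field)
            if value:
                golden[field] = value
                # Keep the origin of each value so it can be traced later.
                golden[field + "_source"] = rec.source
                break
    return golden


records = [
    SourceRecord("crm", date(2024, 5, 1), name="ACME Ltd.", email="ap@acme.example"),
    SourceRecord("erp", date(2023, 11, 12), name="Acme Limited", postcode="SW1A 1AA"),
    SourceRecord("billing", date(2024, 6, 30), name="Acme", email="billing@acme.example"),
]
golden = build_golden_record(records)
print(golden)
```

In this sketch the name and postcode survive from the ERP record, while the email falls back to the CRM record because ERP has none. Recording the source of each surviving value is a small step towards the traceability that business users tend to ask for.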

Enter AI, which changes the world of master data in more ways than one. AI tools like large language models (LLMs) are entirely dependent on data: their own training data, and the additional data they are exposed to. Techniques like retrieval-augmented generation (RAG) expose corporate data such as policies, product specifications and technical manuals to LLMs via a vector search. This is necessary because otherwise an LLM has no context with which to answer company-specific questions like “where is my order?” or “who are my most profitable customers?”.

However, master data management is typically all about structured data that resides in databases: a material master database, a corporate product database etc. Much corporate data is in unstructured form: text files, images like photos of products for ecommerce websites, CAD drawings, case notes etc. These are tougher to govern than structured fields within a database. Also, business users often need not just to see a master record, but to understand where it came from and, if it was merged from several sources, to trace the transformations, when they happened and why.

Tracking the lineage of data across time and from multiple sources is frequently important. LLMs are very good at reading large amounts of unstructured data and spotting patterns within it, so they can help extend MDM from the structured data world to the unstructured. They can parse emails and text files to pull out entities, relationships and attributes. They can spot anomalies like mismatched address records between systems, and propose data quality rules from observed patterns in data. They can also compare structured data fields and probabilistically infer matches: if name, postcode and email address are the same in two different files then the two records probably refer to the same entity. Invoices can be parsed for product codes and linked back to master data product records.
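The matching idea above can be sketched in a few lines of Python. This is not an LLM and not a statistically calibrated probabilistic model; it is a plain weighted string-similarity score using the standard library's `difflib.SequenceMatcher`, standing in for whatever matching engine a real tool would use. The field weights, thresholds and function names are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher

# Hypothetical weights (an assumption): how much each field contributes.
WEIGHTS = {"name": 0.4, "postcode": 0.2, "email": 0.4}


def normalise(value):
    """Lower-case and strip all whitespace so trivial differences vanish."""
    return "".join(value.lower().split()) if value else ""


def field_similarity(a, b):
    """Similarity between 0.0 and 1.0; missing values score 0.0."""
    a, b = normalise(a), normalise(b)
    if not a or not b:
        return 0.0
    return SequenceMatcher(None, a, b).ratio()


def match_score(rec_a, rec_b):
    """Weighted sum of per-field similarities for two records."""
    return sum(
        weight * field_similarity(rec_a.get(field), rec_b.get(field))
        for field, weight in WEIGHTS.items()
    )


def classify(score, auto_merge=0.9, review=0.7):
    """Hypothetical thresholds: merge, send to a human, or keep apart."""
    if score >= auto_merge:
        return "match"
    if score >= review:
        return "review"
    return "no match"


crm = {"name": "Acme Limited", "postcode": "SW1A 1AA", "email": "ap@acme.example"}
billing = {"name": "ACME Limited", "postcode": "sw1a1aa", "email": "AP@acme.example"}
other = {"name": "Zenith Plumbing", "postcode": "M1 2AB", "email": "info@zenith.example"}

print(classify(match_score(crm, billing)))
print(classify(match_score(crm, other)))
```

The CRM and billing records differ only in case and spacing, so they are classified as a match, while the unrelated record is not. The middle "review" band is where borderline pairs would be routed to a person rather than merged automatically.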

In this way, AI can assist MDM by (at least partially) automating the classification and tagging of data, anomaly detection and data quality rule generation, helping with record matching and merging (machine learning has been used for this for some time), and helping to document data lineage. A natural language interface on top of a master data hub can help users find and interpret data, understand data lineage and the survivorship rules used to construct a single golden record from several different source systems. There are still challenges to deal with: LLMs regularly hallucinate, so they may hallucinate false entities from unstructured data, just as they regularly hallucinate fake case precedents in legal documents. A stage of human review is important rather than blithely assuming that LLMs can automate everything.

MDM technology can make use of AI in various ways to help construct better quality master data records, and can extend the scope of master data into the unstructured data world. In their turn, LLMs used in business processes, such as customer service chatbots accessing corporate ordering systems, can be deployed more confidently if they base their answers on trusted master data hubs, rather than having to dynamically interpret data from a range of systems with duplicate records. In this way AI can help improve the quality of the data on which it itself will depend, a virtuous circle. You may want to begin by reviewing the state of your own master data. Is it AI ready? Enterprises that carefully modernise their MDM with AI will improve data quality and also improve the reliability of their AI applications.