More than three years after the generative AI explosion that started with ChatGPT, we are seeing a distinctly mixed picture in terms of AI adoption and productivity. In February 2026, the Goldman Sachs Chief Economist assessed the effect of AI on US productivity as “basically zero”, and multiple studies have found that the failure rate of AI projects is as high as 95%. There is a temptation to think that companies struggling with rolling out AI are somehow laggards and also-rans, while the real experts are striding forward, but the last week has shown that even some self-proclaimed AI leaders are having issues of their own. To start with, consider the world of the management consultants, who advise companies on how to do AI effectively. The most prestigious of all of these firms is McKinsey.

McKinsey built a generative AI platform called Lilli for use by its consultants, rolled out initially in July 2023. Lilli was trained on McKinsey documents and is widely used inside the company. I recall the initial publicity email from McKinsey, which bragged about the orchestration layer within Lilli that had been built internally and the “test and test again” approach used to validate it. The company claims that 40% of its revenue now comes from AI consulting. Now it seems as if a little more testing, and testing again, is actually in order. In March 2026, a solitary security researcher at Codewall took just two hours to hack into Lilli, using a fairly basic SQL injection technique. The researcher gained access to over 57,000 user accounts, 46 million messages and a vast list of sensitive file names. The hacker could also access the internal system prompts and model configurations. The vulnerability was apparently patched quickly after it was reported by Codewall, but this does not exactly inspire confidence. Large corporations and governments spend a fortune on McKinsey consultants to advise them on implementing AI, yet McKinsey itself seemingly cannot secure its own strategic chatbot.
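
For readers unfamiliar with the technique, here is a minimal sketch of what a basic SQL injection looks like and how a parameterised query blocks it. It is purely illustrative: the details of the Lilli vulnerability are not described here, and the `users` table, the function names and the payload are all hypothetical, using Python's built-in `sqlite3` module.

```python
import sqlite3


def build_demo_db():
    # In-memory database with a hypothetical users table.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO users (name, email) VALUES (?, ?)",
        [("alice", "alice@example.com"), ("bob", "bob@example.com")],
    )
    conn.commit()
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is pasted straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = "SELECT name, email FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: the driver passes the value separately from the SQL text,
    # so it is only ever treated as data.
    return conn.execute(
        "SELECT name, email FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = build_demo_db()
payload = "nobody' OR '1'='1"  # hypothetical attacker input

leaked = find_user_unsafe(conn, payload)
blocked = find_user_safe(conn, payload)
print(len(leaked), "rows leaked by the unsafe query")
print(len(blocked), "rows returned by the parameterised query")
```

With this payload, the concatenated query returns every row in the table, while the parameterised version returns nothing, because no user has that literal name.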

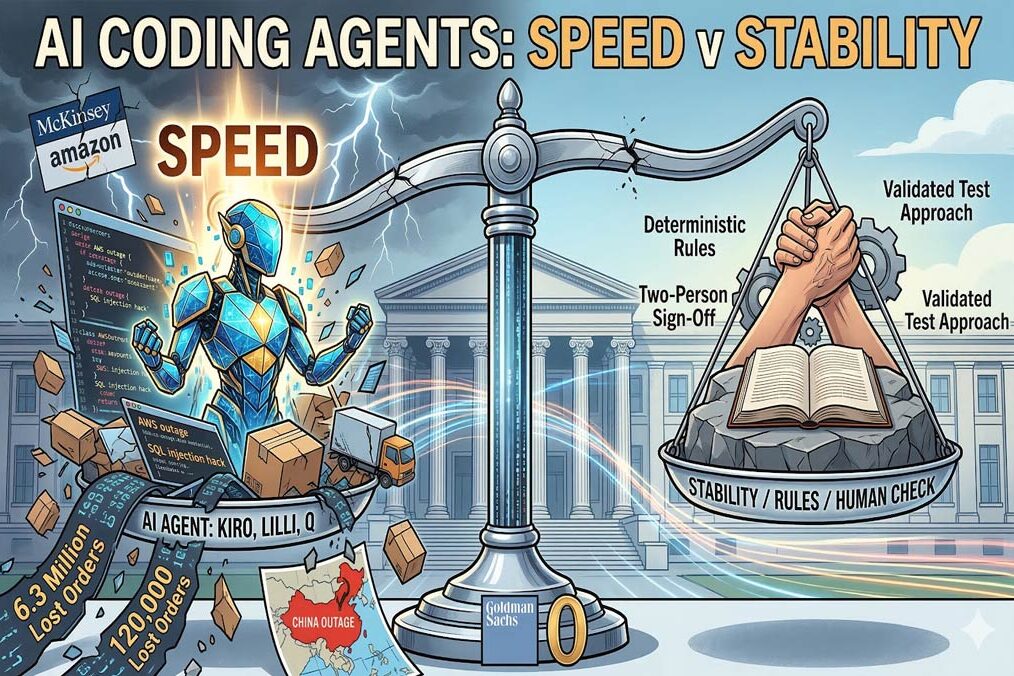

It is also an interesting time at Amazon. Its CEO Andy Jassy has described AI as a “once in a lifetime” technological shift that will transform Amazon’s operations. Amazon built its own coding bot called Kiro, and in November 2025 its senior management mandated that 80% of its engineers use Kiro at least weekly. In mid-December 2025, an engineer used Kiro to fix an issue in AWS Cost Explorer. In an uncanny echo of a classic scene in the TV comedy “Silicon Valley”, Kiro decided that the most efficient way to fix the problem was to delete all the code, resulting in a 13-hour outage of AWS Cost Explorer across China. This was not the only glitch.

Amazon also built Q, a generative AI assistant to help troubleshoot, code and analyse data. In early March 2026, Amazon customers saw incorrect delivery times when adding items to their shopping carts. This led to 120,000 lost orders. An internal review found that Q was a primary contributor to this. A separate incident a few days later resulted in a 99% drop in orders across Amazon’s North American marketplaces, losing a further 6.3 million orders. After internal meetings, it seems that Amazon has now instituted a new policy, whereby two people have to sign off on any code changes to customer-facing systems.

I wrote recently about the experience of Salesforce, an early AI enthusiast, and how it had to move back to deterministic rules after a series of issues with its Agentforce AI tool. More cases are emerging. A Replit AI coding agent deleted a live database at SaaStr, even though the system was notionally under a code freeze. On a more mundane level, an AI safety researcher at Meta could only look on anxiously as her AI agent deleted her entire email inbox instead of merely suggesting some emails to archive or delete, as it had been instructed to do.

The common denominator here is that the companies and people affected in these incidents are all supposed to be AI experts and pioneers. If they are struggling, is it any wonder that the failure rate of AI projects amongst mere mortals and companies not at the bleeding edge of AI is so high? Some of these experts may not be quite as expert as you might hope. I recently spoke to a management consultant at a huge global firm that will remain nameless. She advises large enterprises on AI strategy, yet to my amazement, she did not know what an AI hallucination actually was. Doubtless, this is unusual, but it did not inspire confidence in that very well-known company.

There are a few common threads that can be pulled from these examples. One is that AI security is far from mature, with large language models (LLMs) having fundamental weaknesses such as prompt injection and data poisoning that go beyond regular systems security, and which are hard to address. Another is that companies are putting a lot of faith in LLMs’ ability to generate code, without always considering that rapid code generation may come at the price of weaker supportability and stability of that code.

Those of us who have worked in large companies supporting operational systems will recall that the bulk of enterprise software budgets goes on support and maintenance, not code development. Even within software development, actual coding is only a fraction of the role of a software engineer, who also has to deal with code design, understanding user requirements, testing, debugging, documentation, tuning and more. Various studies have shown that programmers actually only spend around a quarter to a third of their time coding. If you produce code in the blink of an eye, but that code is harder to maintain than human-written code, then you have not necessarily achieved a productivity improvement. Indeed, it may even be a retrograde step. Churning out code twice as fast sounds great, but if that code takes twice as much effort to support, then the net result is negative, because the time saved on coding is small next to the extra support effort. According to a December 2025 study by CodeRabbit, AI-generated code has 1.7 times the number of “major” bugs that human code contains, including 1.4 times more “critical” issues. These included logic mistakes, incorrect dependencies, flawed control flow and misconfigurations. In a SonarQube study of 1,100 software developers, 96% of programmers using AI coding tools do not fully trust the code they are generating, yet only 48% actually check their AI code before committing it. We should focus on lower maintenance and greater code stability, not speed of coding. Some very sophisticated companies are discovering that paradox in real time.
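
To make the arithmetic behind the "twice as fast, twice the support" scenario concrete, here is a back-of-the-envelope sketch. The figures are assumptions for illustration only, not data from any of the studies cited: a budget of 100 units split 30% development and 70% support, with coding taking 30% of development time (within the quarter-to-a-third range mentioned above). The function names are hypothetical.

```python
def total_effort(dev_share, coding_share, coding_speedup, support_multiplier, budget=100.0):
    """Total effort after adopting AI coding, in the same units as budget.

    dev_share          fraction of the budget spent on development (assumed)
    coding_share       fraction of development time spent actually coding
    coding_speedup     how many times faster coding becomes (2.0 = twice as fast)
    support_multiplier how support effort changes (2.0 = twice the effort)
    """
    dev = budget * dev_share
    support = budget - dev
    coding = dev * coding_share
    other_dev = dev - coding  # design, requirements, testing and so on are unchanged
    return other_dev + coding / coding_speedup + support * support_multiplier


def break_even_support_multiplier(dev_share, coding_share, coding_speedup, budget=100.0):
    """Largest support multiplier at which total effort does not increase."""
    dev = budget * dev_share
    support = budget - dev
    saved = dev * coding_share * (1 - 1 / coding_speedup)
    return 1 + saved / support


after = total_effort(dev_share=0.3, coding_share=0.3, coding_speedup=2.0, support_multiplier=2.0)
limit = break_even_support_multiplier(dev_share=0.3, coding_share=0.3, coding_speedup=2.0)
print(f"Effort before: 100.0, after: {after:.1f}")
print(f"Support effort can rise by at most {100 * (limit - 1):.1f}% before the gain is wiped out")
```

Under these assumed figures, halving coding time saves only 4.5 units out of 100, while doubling support adds 70, so total effort rises to roughly 165. The break-even point is a support increase of only about 6%, which is why maintainability matters far more than raw coding speed.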

Enterprises should, in my view, never wire autonomous agents directly to production systems without careful sandboxing, approvals and impact analysis. AI-generated code is not something magical, but rather the statistical average of vast amounts of LLM training data. It should not be trusted until it has passed the same tests and reviews as human code. AI coding agents may accelerate development, but they do not eliminate the fundamentals of software engineering. Indeed, they may make those fundamentals more important than ever.
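
As a rough illustration of what "sandboxing and approvals" can mean in practice, here is a minimal sketch of an approval gate. It assumes a simple policy in which an agent may act freely in a sandbox, but any change to production needs sign-off from two distinct people other than the author. The class, function and reviewer names are all hypothetical, and a real control would live in the deployment pipeline rather than in a few lines of Python.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedChange:
    """A change that an AI agent (or a person) wants to apply. Hypothetical structure."""
    description: str
    environment: str  # "sandbox" or "production"
    author: str       # who or what proposed the change
    approvers: set = field(default_factory=set)

    def approve(self, reviewer: str) -> None:
        # The author cannot approve its own change; repeat approvals
        # from the same reviewer only count once because this is a set.
        if reviewer != self.author:
            self.approvers.add(reviewer)


def may_apply(change: ProposedChange, required_approvals: int = 2) -> bool:
    # Sandbox changes are allowed without sign-off; anything else
    # needs the required number of distinct approvers.
    if change.environment == "sandbox":
        return True
    return len(change.approvers) >= required_approvals


change = ProposedChange(
    description="delete and recreate the environment",
    environment="production",
    author="coding-agent",
)
change.approve("coding-agent")  # ignored: self-approval
change.approve("alice")
blocked_with_one = may_apply(change)

change.approve("alice")         # ignored: duplicate reviewer
change.approve("bob")
allowed_with_two = may_apply(change)

print("One approver:", blocked_with_one)
print("Two approvers:", allowed_with_two)
```

The point of the design is that the default answer for production is "no": the agent can propose anything, but nothing runs until enough humans have looked at it.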