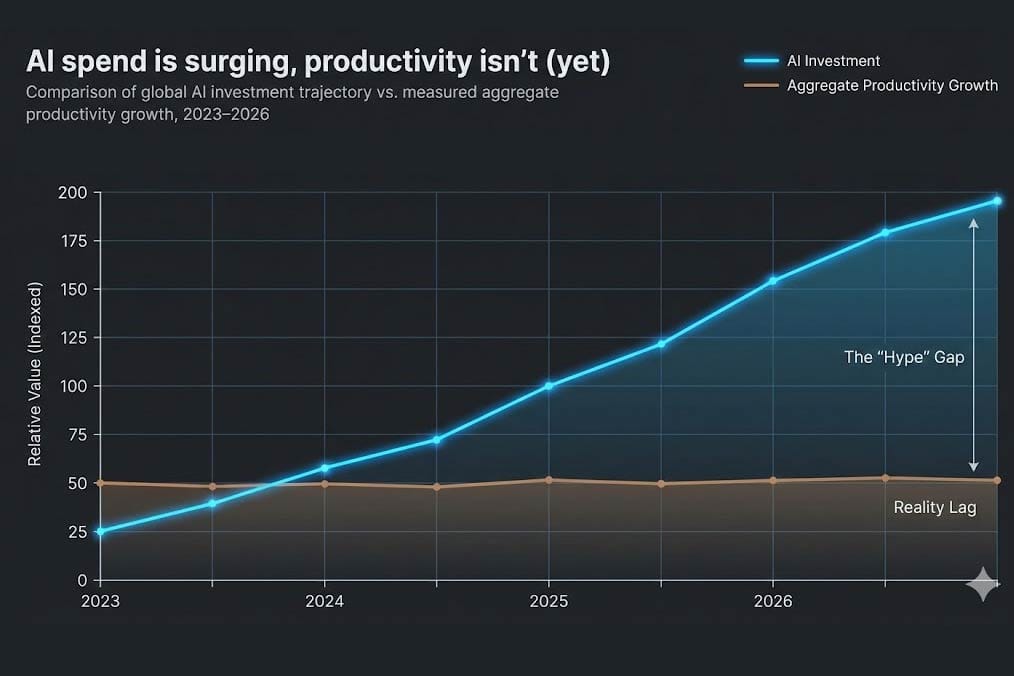

We are living through an AI paradox. Three years on and hundreds of billions of dollars into the AI boom, the impact on productivity is, according to Goldman Sachs, “near zero”. Yet corporate and VC investment in AI has never been higher. Since ChatGPT was released in November 2022, generative AI has become a buzzword. Money has poured into the technology, both from investors funding AI software start-ups and from corporates hoping to see the effect of this seemingly transformative technology. According to the OECD, 61% of all VC investment in 2025 was in AI, adding up to $259 billion. Around $35 billion of this went specifically to generative AI, with €109 billion spent in 2025 on companies providing AI infrastructure and hosting. Corporate AI investment reached $235 billion in 2024, according to a Stanford University report.

So, what has been the economic impact of this huge splurge in AI spending? According to a February 2026 Washington Post article, the chief economist of Goldman Sachs claimed that the impact has been “near zero”. A survey of 6,000 executives by the National Bureau of Economic Research found that 70% of firms now actively use AI, yet 80% of those firms report zero effect on either productivity or employment over the last three years. The same report found that executives hope that AI will have an impact in the next three years, looking for a 1.4% increase in productivity three years out. However, these are hopes rather than cold, hard numbers, and they show a major discrepancy between projections and what has been achieved so far. Since most companies approve projects based on a projected return on investment in under three years, it is likely that a great many of those same executives approved AI investment between 2023 and 2025 on the expectation of a return by now. Yet for most companies, none has materialised.

This news is perhaps unsurprising given the various studies that have surveyed AI project success. AI projects are failing at an alarming rate, much higher than general IT projects. MIT reckoned the failure rate was 95%, as did Boston Consulting Group, while Gartner estimated 85% and RAND estimated 80%. The reasons remain somewhat speculative, but they range from poor enterprise data quality and AI tools being used for the wrong kind of task to a lack of AI literacy and difficulty in integrating with existing systems.

To be fair, productivity takes time to show up in official figures. Early investments in computing took years to show up in government statistics. But in the case of AI it is not just a lag. The studies above show that the actual investment projects are failing at an epic rate. Even with software coding, one of the poster children of AI use cases, the evidence is distinctly mixed. A 2025 study by Model Evaluation and Threat Research (METR) on experienced open-source developers found that using AI tools (like Claude and Cursor) made developers 19% slower, even though the developers themselves believed the tools had made them 20% faster. For sure, this field is developing quickly, and the models are improving, but the picture even here is still at best nuanced. AI models can spew out new code at great speed, as well as helping with unit tests and documentation, but they are less capable when dealing with the large existing codebases that large corporations rely on for their production systems.

Even things that generative AI seems good at can be problematic at a second glance. Large language models can summarise documents in a flash, but a 2025 study by the BBC and European Broadcasting Union found that 45% of AI summaries of news articles had significant errors. This is troubling when considering the use of such models in more sensitive areas, such as medicine or law.

The latest flavour of AI, agentic AI, is in an even less proven state. Anecdotes abound, such as the amusing experiment done by Anthropic of using its Claude model to run a tiny test business. Just this week, an AI safety and security researcher at Meta made headlines when she revealed that her AI agent, when asked to help her streamline the email messages in her inbox, promptly started to delete the lot despite being explicitly instructed not to. If someone working in AI at Meta struggles in this way, it suggests that using agentic AI could end badly in less skilled hands. This is especially the case since agentic AI is notoriously weak at security. A February 2026 MIT study described agentic AI as a “security nightmare”.

To zoom back out to the broader economy, it is clear that some of the initial AI lustre is starting to fade. There remains a wide range of potential use cases, and there have certainly been some success stories. Individual projects or tasks may show significant productivity improvements, but this is simply not coming through at the macro level. If the technology were really living up to the heroic expectations set by the AI industry's many boosters, the sheer mass of investment made should by now be achieving better results than we are seeing. The world of AI continues to develop apace, and there are no signs of investment slowing at this stage, but at some point, businesses are going to expect to see an actual monetary return on their considerable AI investments. The clock is ticking.