Corporations are investing a fortune in large language models (LLMs) like ChatGPT and Claude based on the promise that they can automate business processes, reduce costs and provide new insights. The reality has proved much trickier than the sales pitch, but just how reliable are these models at basic calculations and logic anyway?

A research paper from Apple tested a range of LLMs on a series of fairly basic benchmark questions taken from US grade-school tests, which are aimed at children aged 5 to 11. The LLMs generally performed well when the questions were asked as stated, but their performance dropped off dramatically when distracting elements were added. The paper itself was mostly about proposing a reinforced abstraction-learning method, designed to improve robustness by teaching models to construct abstractions and use symbolic tools. It would be easy to miss its more worrying finding: on some fairly basic logic tests, LLMs are unreliable and sensitive to irrelevant context.

Another 2025 research paper, by Mirzadeh et al. at Apple, titled “GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models”, ran a suite of 5,000 test questions through twenty LLMs. It found that the models' performance dropped markedly when the phrasing of questions was varied, suggesting that LLMs rely heavily on pattern matching from their training data rather than on genuine reasoning. For example, consider this question:

“Oliver picks 44 kiwis on Friday. Then he picks 58 kiwis on Saturday. On Sunday, he picks double the number of kiwis he did on Friday. How many kiwis does Oliver have?”

The correct answer is 44 + 58 + (2 × 44) = 44 + 58 + 88 = 190.

But what if the LLMs are instead asked this variation on the question:

“Oliver picks 44 kiwis on Friday. Then he picks 58 kiwis on Saturday. On Sunday, he picks double the number of kiwis he did on Friday, but five of them were a bit smaller than average. How many kiwis does Oliver have?”

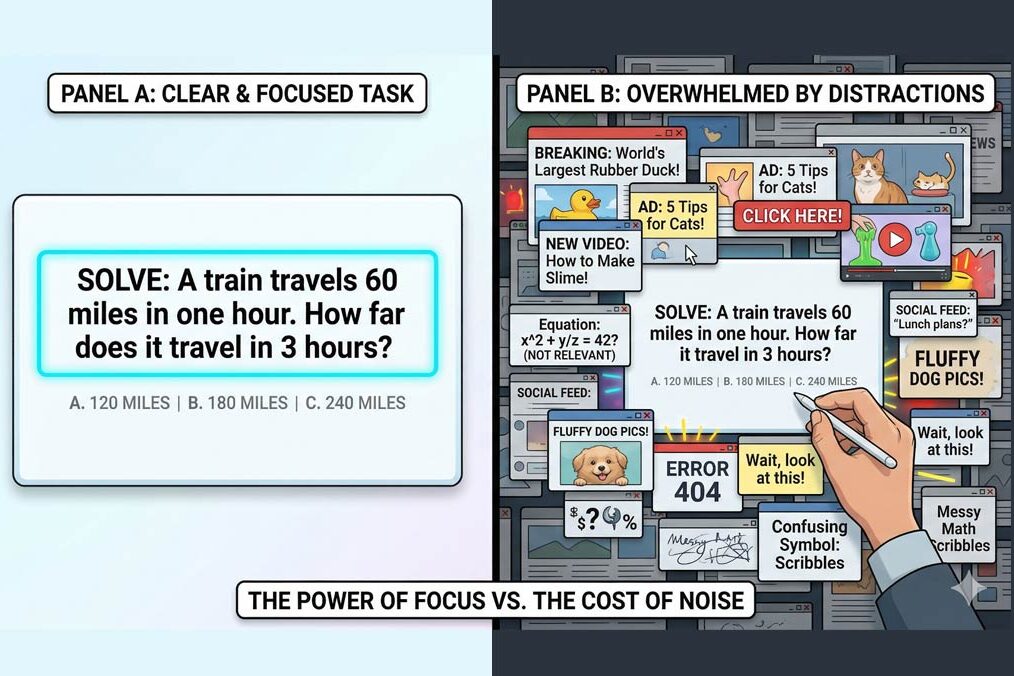

The answer should, of course, be the same: whether some of the kiwis are smaller than average is irrelevant, and a human would simply ignore the distraction. Not so the LLMs, which often confidently but wrongly subtracted 5, giving 185 kiwis. According to the paper, a single clause that seems relevant but is not can cause performance drops of up to 65%.

The more distracting phrases were added, the worse the performance became. In some cases, the models incorporated irrelevant numeric-sounding phrases directly into the computation.
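To make the arithmetic of the kiwi question explicit, here is a minimal Python sketch. The function name `kiwi_total` is my own, chosen purely for illustration. The point it shows is that the “smaller than average” clause contributes no term to the sum, whereas the typical model error treats it as a subtraction.

```python
def kiwi_total(friday: int, saturday: int, sunday_multiplier: int = 2) -> int:
    """Total kiwis picked over three days; Sunday is a multiple of Friday."""
    sunday = sunday_multiplier * friday  # "double the number ... on Friday"
    return friday + saturday + sunday


correct = kiwi_total(friday=44, saturday=58)
print(correct)  # 190

# "Five of them were a bit smaller than average" changes nothing: size is
# irrelevant to the count. The error described above subtracts it anyway.
smaller_than_average = 5
distracted = correct - smaller_than_average
print(distracted)  # 185
```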

This paper was published in June 2025, so it is fair to wonder whether the latest models are smarter. In April 2026 I ran a small experiment of my own, trying five LLMs: ChatGPT, Claude, Grok, Gemini and Mistral. I asked each of them the following question:

“Alice has three brothers. Each brother has two sisters. How many sisters does Alice have?”

If you think about this for a moment, you will see that Alice has one sister: if each brother has two sisters, those sisters must be Alice and one other girl. So how did the LLMs get on?
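The sibling puzzle can also be checked by brute force. This hypothetical Python sketch assumes that all the children belong to one family and are full siblings. It builds candidate families, keeps the first one in which every brother has exactly two sisters, and then counts Alice's sisters. Note that the number of brothers plays no part in the answer.

```python
def make_family(brothers: int, other_girls: int) -> dict[str, str]:
    """Build one family of full siblings: Alice, her brothers and any other girls."""
    family = {"Alice": "F"}
    family.update({f"brother{i}": "M" for i in range(brothers)})
    family.update({f"girl{i}": "F" for i in range(other_girls)})
    return family


def sisters_of(person: str, family: dict[str, str]) -> int:
    """A person's sisters are all the *other* girls in the family."""
    return sum(1 for name, sex in family.items() if sex == "F" and name != person)


def alice_sisters(brothers: int, sisters_per_brother: int, max_girls: int = 20) -> int:
    """Find the family consistent with the puzzle, then count Alice's sisters."""
    for other_girls in range(max_girls):
        family = make_family(brothers, other_girls)
        boys = [name for name, sex in family.items() if sex == "M"]
        if all(sisters_of(boy, family) == sisters_per_brother for boy in boys):
            return sisters_of("Alice", family)
    raise ValueError("no family matches the puzzle's constraints")


print(alice_sisters(brothers=3, sisters_per_brother=2))  # 1
```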

ChatGPT initially reckoned Alice had two sisters, but corrected itself when I challenged it with “Are you sure about that?”. Mistral got it right first time. Grok claimed two sisters but corrected itself when challenged. Claude got it right first time. Gemini was also right first time and did not change its answer when challenged.

Curiously, when I tried ChatGPT again later the same day, it got the answer right first time (unlike earlier), but when I challenged it, it changed its answer to three sisters. This is a reminder that LLMs are probabilistic by nature. Unlike deterministic systems such as Excel, which give the same result for a calculation every time, LLMs are prediction engines whose answers vary. The degree of variation is influenced by the model's “temperature” setting, which users of consumer chat interfaces usually cannot control. LLMs are trained as next-token predictors, not as symbolic reasoning algorithms. Each token is drawn from a probability distribution conditioned on everything in the prompt, so even an irrelevant phrase shifts those probabilities and can steer the model down a different line of reasoning.
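To illustrate what temperature does, here is a simplified Python sketch of temperature-scaled sampling. The three candidate answers and their raw scores (logits) are made up for illustration, and real models sample over vocabularies of many thousands of tokens with further settings layered on top. The core idea is the same, though: scores are divided by the temperature before being turned into probabilities, so a low temperature concentrates probability on the top answer and a higher temperature spreads it out.

```python
import math
import random


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # Many implementations treat temperature 0 as "always pick the top token";
        # this sketch only handles positive values.
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample(tokens: list[str], logits: list[float], temperature: float,
           rng: random.Random) -> str:
    """Draw one token according to the temperature-scaled probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical scores for three candidate answers to "How many sisters?"
tokens = ["1", "2", "3"]
logits = [2.0, 1.5, 0.2]

rng = random.Random(42)  # fixed seed so the sketch is reproducible
for temperature in (0.2, 1.0):
    answers = [sample(tokens, logits, temperature, rng) for _ in range(1000)]
    counts = {tok: answers.count(tok) for tok in tokens}
    print(f"temperature={temperature}: {counts}")
```

Running it shows the low-temperature setting picking “1” almost every time, while the higher setting produces a noticeable share of wrong answers from exactly the same scores.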

It turns out that a whole range of issues can confuse LLMs. We have seen that adding distracting phrases degrades performance, and so can phrasing the same question in different but structurally equivalent ways. Here is another distractor test that I tried in April 2026:

“If Alice has 3 apples and gives 1 away, how many remain?”

And then the variant:

“If Alice has 3 apples and gives 1 away, and Bob likes oranges, how many remain?”

Obviously, two apples remain. Any human can see this, as could ChatGPT, Gemini, Claude and Mistral, but Grok thought that just one apple remained.

These little tests confirm that, even in April 2026, some leading language models are distinctly fragile when it comes to quite basic logic and can be thrown by minor distractions in the phrasing of questions, just as the research papers above showed in 2025. A separate 2026 paper from Stanford University explored how fragile LLM answers are when a question is slightly reworded. And in April 2026, Microsoft updated the terms and conditions for its Copilot product (which is built on OpenAI's GPT models) to say: “Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

What troubles me is this: how many business executives are aware of these limitations when they build LLMs into their enterprise systems? And how carefully are LLM answers being checked within corporations? Enterprises would be wise to establish processes to validate LLM outputs carefully, especially in critical applications or wherever accuracy and consistency are important. LLMs should not be trusted blindly on tasks requiring exact arithmetic, consistent logic or repeatable outputs. High-value workflows need validation, fallback rules and human review wherever errors matter. LLM answers are probabilistic suggestions, not authoritative facts.
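One simple form of validation for numeric tasks is to recompute the answer deterministically and route any disagreement to a human. The Python sketch below is hypothetical: `validate_numeric_answer`, the `Review` record and the last-number extraction heuristic are my own illustrative choices, not part of any LLM product's API.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    accepted: bool
    llm_value: Optional[float]
    expected: float
    reason: str


def extract_number(reply: str) -> Optional[float]:
    """Take the last number in a free-text reply (a deliberately simple heuristic)."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", reply.replace(",", ""))
    return float(matches[-1]) if matches else None


def validate_numeric_answer(reply: str, expected: float,
                            tolerance: float = 1e-9) -> Review:
    """Accept the LLM's answer only if it matches a deterministic calculation."""
    value = extract_number(reply)
    if value is None:
        return Review(False, None, expected, "no number found: send to human review")
    if abs(value - expected) > tolerance:
        return Review(False, value, expected, "disagrees with calculation: send to human review")
    return Review(True, value, expected, "matches deterministic calculation")


# Recompute the kiwi total deterministically, then check two possible replies.
expected_total = 44 + 58 + 2 * 44
for reply in ["Oliver has 190 kiwis.", "Oliver has 185 kiwis."]:
    print(validate_numeric_answer(reply, expected_total))
```

In a real workflow the deterministic check would come from the organisation's own business logic (a database query, a spreadsheet formula, a rules engine) rather than a hard-coded sum.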