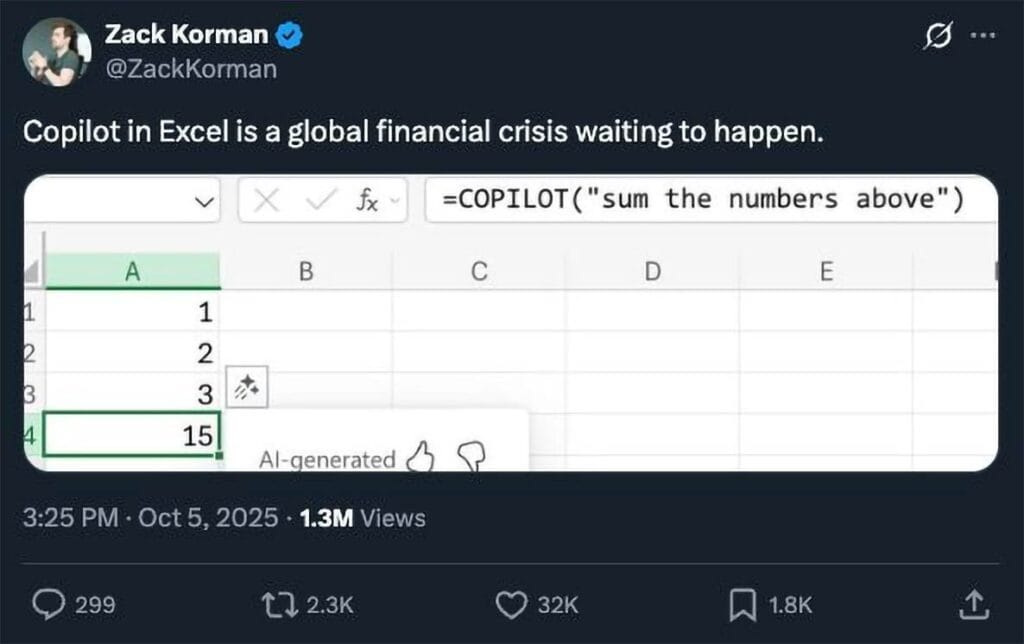

I saw a disturbing image today. Not something from a war zone or a horror film, but a post on LinkedIn.

Microsoft have, in their wisdom, introduced a large language model (LLM)-driven AI helper to Excel called Copilot. After entering “=copilot( )”, you can type in any text you like within the brackets, and Excel will try to do what it thinks best in response to your prompt. However, as you can see from the above screenshot, this is not reliable. Why would it be? It is an LLM, and LLMs are inherently probabilistic in nature. Microsoft themselves say that you should not use Copilot for “numerical calculations, financial reporting, legal documents, or any scenario where accuracy and reproducibility are essential.” Doesn’t that sound like just about anything that you would actually want to do in Excel?

Doubtless, it can be argued that Copilot could be useful. Copilot can help organise the import of data, maybe classifying survey data or creating pivot tables (which some users find tricky) for exploratory data analysis. Perhaps it could be used to spot data outliers, or do some data formatting. But do you know what it should not be used for? Any calculation whatsoever. There is no world in which, when I set up a calculation in Excel, I want an answer that depends on what mood an LLM is in that day, which in practice means its internal temperature setting (the parameter controlling how random the model’s output is). It is hard to imagine a world in which the answer to a spreadsheet calculation should vary from time to time. This unreliability is not merely an inconvenience; it is a compliance nightmare waiting to happen.
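To make the temperature point concrete, here is a toy sketch of temperature-based sampling. It is not how Copilot is implemented; the candidate answers, the scores, and the function names are all invented for illustration. The idea it shows is general: at temperature 0 the highest-scoring answer is always picked, while at higher temperatures lower-scoring answers are sometimes picked instead.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_answer(candidates, logits, temperature, rng):
    """Pick one candidate; temperature 0 is treated as greedy (top score wins)."""
    if temperature == 0:
        return candidates[logits.index(max(logits))]
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]

# Hypothetical candidate answers to "sum these three cells", with made-up scores.
candidates = ["60", "59", "61"]
logits = [2.0, 1.0, 0.5]

rng = random.Random(0)  # seeded only so the demo itself is repeatable
greedy = {sample_answer(candidates, logits, 0, rng) for _ in range(1000)}
sampled = {sample_answer(candidates, logits, 1.0, rng) for _ in range(1000)}

print(greedy)   # one answer, every time
print(sampled)  # several different answers across runs
```

An ordinary spreadsheet formula behaves like the greedy case by construction. A sampled model does not, which is exactly the property you do not want in a calculation.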

Spreadsheets have the advantage of being explainable and auditable, important for most businesses but especially so for any business in a heavily regulated industry like banking. The use of Copilot within Excel opens up a Pandora’s box of regulatory risk, as you can no longer be certain that the calculations are consistent. Copilot automates access to swathes of enterprise information, potentially giving users inappropriate access to confidential data. The known tendency of LLMs to hallucinate plausible but incorrect formulas or summaries adds the risk of regulatory non-compliance.

There are other concerns, in particular security. The Copilot tool necessarily has access to data records, and yet corporates are notoriously lax when it comes to carefully restricting sharing permissions. One study of 550 million data records found that 83% of the records were unnecessarily shared within the company, and 17% were shared with third parties. That is an issue in itself, but the risk is multiplied when LLMs are involved, as LLMs have various security weaknesses over and above those of regular applications. In particular, they are vulnerable to prompt injection. This can lead to sensitive data being exfiltrated just by means of cleverly worded prompts that skirt around the notional guardrails built into LLMs during their alignment training. The technique can be used to access data that should not be accessed or to introduce malware. Although measures can be taken to try to defend against this, these measures are not guaranteed to be effective, and also need to be actively put in place. How many companies do you think have done this? The track record is not encouraging. A Boston Consulting Group survey found that 72% of companies are not ready, by their own admission, for AI regulation, and a separate study found that just 1.6% of companies have integrated AI into their compliance policies.

It seems to me that the risks of using LLM-style helpers within tools like Excel significantly exceed the potential benefits. Fortunately, a disaster can be averted simply by switching the Copilot feature off. Just go to:

File, Options, Copilot

Untick the checkbox, click OK, and restart Excel.

Think of it as an exorcism, but with less holy water.