Although large language models (LLMs) like ChatGPT are very much in vogue, there are many other flavours of AI. Some are mostly historical, like expert systems, but others are very relevant to the present. Machine learning, for example, is the technology behind most of the predictive maintenance use cases in industry that you will read about, while deep learning applied to structural biology is the technology behind Google DeepMind’s AlphaFold, which won DeepMind co-founder Demis Hassabis and his colleague John Jumper a share of the Nobel Prize in Chemistry in 2024. There are other types of AI too, one of which is emerging as an important one for the future: energy-based models.

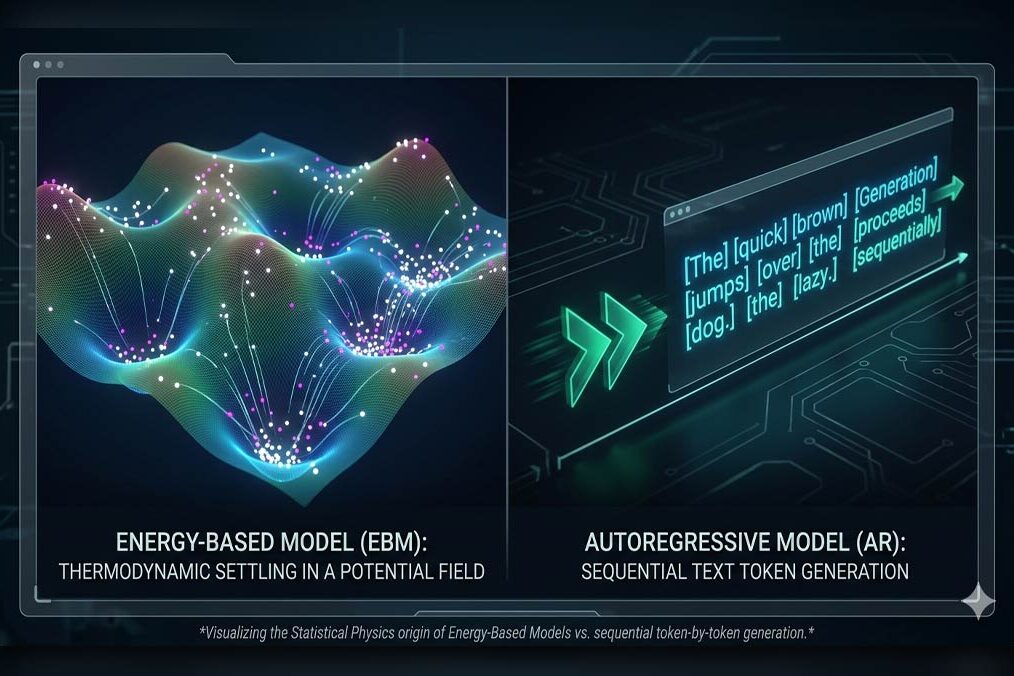

Energy-based models (EBMs) take a fundamentally different approach to AI from large language models (LLMs). LLMs are trained on vast amounts of text (or image) data and work out how basic units of text (tokens) are related to one another. They store these relationships in the form of mathematical weights, and use them to generate new text, one token at a time, in response to input prompts. An LLM asks: “Given what I have seen so far, what comes next?”. The reasoning process of an LLM is opaque, spread across a neural network with dozens of layers, and so is effectively a black box: you cannot readily determine why an LLM came up with a certain answer; you can only evaluate its output.

EBMs are inspired by statistical physics, where each possible state of a system has an energy level (a score) and low-energy states are the most probable. EBMs assign an energy score to candidate outputs and use iterative search to find low-energy, globally consistent solutions.

A low-energy state is good, such as a coherent sentence or a realistic image. High-energy states, such as nonsensical text or random pixels, are bad. An EBM essentially asks: “Which full output is best globally?”. You can think of it as a kind of optimiser. LLMs generate outputs quickly by predicting the next token, while EBMs search more slowly for globally consistent solutions.
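
To make the idea of searching for a low-energy state concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the one-dimensional `energy` function, the fixed starting points and the step size are all hypothetical, and a real EBM would use a learned neural network as its energy function over far richer outputs.

```python
def energy(x: float) -> float:
    """Hypothetical energy landscape with two valleys.

    The deeper (global) valley lies near x = -2.1 and a shallower
    (local) valley lies near x = 1.9.
    """
    return (x * x - 4.0) ** 2 / 8.0 + 0.5 * x


def energy_gradient(x: float) -> float:
    """Analytic derivative of `energy` with respect to x."""
    return x * (x * x - 4.0) / 2.0 + 0.5


def descend(x: float, step: float = 0.05, iterations: int = 500) -> float:
    """Iteratively move a candidate downhill until it settles in a valley."""
    for _ in range(iterations):
        x -= step * energy_gradient(x)
    return x


def search(starts):
    """Refine several candidates and keep the one with the lowest energy."""
    settled = [descend(x0) for x0 in starts]
    return min(settled, key=energy)


# Four arbitrary starting candidates spread across the landscape.
starts = [-3.0, -1.0, 1.0, 3.0]
best = search(starts)
print(f"best candidate x = {best:.3f}, energy = {energy(best):.3f}")
```

Two of the starting points settle in the shallow valley and two in the deep one. Comparing the final energies is what lets the search prefer the globally better answer, and the hundreds of small steps are the run-time cost of working this way.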

EBMs, unlike LLMs, can expose part of their internal workings, though as numerical energy scores rather than as human-readable reasoning. They accept flexible constraints and are very general in nature. In principle, an EBM could do everything an LLM can, including language translation, dialogue and coding, though EBMs are harder to train and less operationally convenient than LLMs.

EBMs are not new, but they have not taken off in the way that LLMs have recently. EBMs are elegant in many ways. They can require less training data than LLMs, though training involves trickier sampling, so training costs in terms of compute can still be very high. Another key difference between LLMs and EBMs concerns hallucinations. An LLM will sometimes fabricate plausible but incorrect answers, especially where it has gaps in its training data. An EBM judges and optimises its outputs, searching for low-energy states. It is less prone to unconstrained fabrication because outputs are judged against a score. It can still make mistakes if the scoring model is wrong, but these mistakes can be corrected. You can also apply constraints to an EBM, making it more suitable than an LLM for tasks where consistency is important, such as robotics, engineering or safety-critical systems.
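
One simple way to picture how a constraint can be applied is to add it to the energy as a penalty term. The sketch below is a toy illustration under stated assumptions, not a description of any production system: the robot-joint scenario, `TARGET`, `LIMIT`, `PENALTY_WEIGHT` and the step size are all hypothetical values chosen for the example.

```python
TARGET = 3.0            # hypothetical joint angle the task would like to reach
LIMIT = 2.0             # hypothetical safety limit the joint must not exceed
PENALTY_WEIGHT = 100.0  # how strongly violations of the limit are punished


def total_energy(angle: float, constrained: bool) -> float:
    """Task energy plus, optionally, a penalty for exceeding the limit."""
    task = (angle - TARGET) ** 2
    excess = max(0.0, angle - LIMIT)
    penalty = PENALTY_WEIGHT * excess ** 2 if constrained else 0.0
    return task + penalty


def gradient(angle: float, constrained: bool) -> float:
    """Derivative of `total_energy` with respect to the angle."""
    grad = 2.0 * (angle - TARGET)
    if constrained and angle > LIMIT:
        grad += 2.0 * PENALTY_WEIGHT * (angle - LIMIT)
    return grad


def minimise(constrained: bool, start: float = 0.0,
             step: float = 0.004, iterations: int = 3000) -> float:
    """Plain gradient descent on the (optionally constrained) energy."""
    angle = start
    for _ in range(iterations):
        angle -= step * gradient(angle, constrained)
    return angle


free = minimise(constrained=False)
safe = minimise(constrained=True)
print(f"unconstrained answer: {free:.3f}")
print(f"constrained answer:   {safe:.3f}")
```

Without the penalty the search settles on the target itself. With the penalty it stops very close to the limit, because any candidate beyond the limit is scored as high energy. A soft penalty like this allows a tiny overshoot, so a safety-critical design would likely need a stricter mechanism.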

One common way to train an EBM is “denoising score matching”. The model explicitly learns to take corrupted, noisy data and map it “downhill” into a clean, low-energy state, as discussed in this research paper. By applying this at multiple noise scales, EBMs can restore degraded images or synthesise entirely new high-quality images from pure noise. This mathematical foundation is closely related (via score matching) to the diffusion models behind image generators such as Midjourney, DALL-E and Stable Diffusion that dominate AI image generation today.
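
The following is a minimal sketch of denoising score matching on one-dimensional data, using only the Python standard library. It rests on several assumptions made purely for illustration: the data is drawn from a single Gaussian, only one noise scale is used, and the "model" is a straight line `score(x) = a * x + b` fitted by least squares rather than a neural network. The score is the negative slope of the energy, so following it means moving downhill.

```python
import random

random.seed(0)

DATA_MEAN, DATA_STD = 2.0, 1.0   # hypothetical "clean" data distribution
NOISE_STD = 0.5                  # a single noise scale, for simplicity

# Corrupt clean samples with Gaussian noise.
clean = [random.gauss(DATA_MEAN, DATA_STD) for _ in range(20000)]
noisy = [x + random.gauss(0.0, NOISE_STD) for x in clean]

# Denoising score matching target: the direction from each noisy point
# back towards its clean original, scaled by 1 / noise variance.
targets = [(x - x_noisy) / NOISE_STD ** 2 for x, x_noisy in zip(clean, noisy)]

# Fit score(x) = a * x + b by ordinary least squares, which minimises
# the mean squared difference between score(noisy) and the targets.
n = len(noisy)
mean_x = sum(noisy) / n
mean_t = sum(targets) / n
cov_xt = sum((x - mean_x) * (t - mean_t) for x, t in zip(noisy, targets)) / n
var_x = sum((x - mean_x) ** 2 for x in noisy) / n
a = cov_xt / var_x
b = mean_t - a * mean_x


def score(x: float) -> float:
    """Learned score, i.e. the negative gradient of the implied energy."""
    return a * x + b


def denoise(x: float, step: float = 0.1, iterations: int = 200) -> float:
    """Follow the learned score downhill towards the low-energy region."""
    for _ in range(iterations):
        x += step * score(x)
    return x


print(f"learned slope a = {a:.3f}, intercept b = {b:.3f}")
print(f"a point starting at 10.0 settles near {denoise(10.0):.3f}")
```

For this toy setup the theoretical slope is -1 / (DATA_STD² + NOISE_STD²) = -0.8, and the learned line should land close to it. A badly corrupted starting point is then pulled back towards the centre of the clean data, which is the "downhill" behaviour described above. This sketch omits the random noise term that practical samplers usually add at each step.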

Another application is in healthcare, where a full magnetic resonance imaging (MRI) scan can be very slow to acquire. EBMs can be used to reconstruct high-quality MRI images from sparse, fast, “under-sampled” data. The EBM learns a “prior” energy distribution over normal human anatomy, which allows it to fill in missing visual information and suppress measurement noise without needing overly rigid network architectures.
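
The reconstruction idea can be sketched on a toy one-dimensional signal. This is a heavily simplified illustration under stated assumptions: real MRI data is sampled in the frequency domain and a real EBM would learn its prior from scans, whereas here the "prior" is a hand-written smoothness energy, the signal is a sine wave, and `SMOOTHNESS` and the step size are hypothetical values.

```python
import math

N = 33
truth = [math.sin(2 * math.pi * i / 32) for i in range(N)]

# "Under-sampled" measurement: only every fourth sample is observed.
observed = {i: truth[i] for i in range(0, N, 4)}

SMOOTHNESS = 0.1  # hypothetical weight of the prior energy


def energy(x):
    """Data-fit energy on observed samples plus a smoothness prior."""
    data = sum((x[i] - y) ** 2 for i, y in observed.items())
    prior = sum((x[i + 1] - x[i]) ** 2 for i in range(N - 1))
    return data + SMOOTHNESS * prior


def gradient(x):
    """Gradient of `energy` with respect to every sample of x."""
    grad = [0.0] * N
    for i, y in observed.items():
        grad[i] += 2.0 * (x[i] - y)
    for i in range(N - 1):
        diff = x[i + 1] - x[i]
        grad[i] -= 2.0 * SMOOTHNESS * diff
        grad[i + 1] += 2.0 * SMOOTHNESS * diff
    return grad


def reconstruct(step: float = 0.4, iterations: int = 1000):
    """Start from a zero-filled signal and descend the energy."""
    x = [observed.get(i, 0.0) for i in range(N)]
    for _ in range(iterations):
        grad = gradient(x)
        x = [xi - step * gi for xi, gi in zip(x, grad)]
    return x


def mean_abs_error(x):
    return sum(abs(a - b) for a, b in zip(x, truth)) / N


zero_filled = [observed.get(i, 0.0) for i in range(N)]
recovered = reconstruct()
print(f"error with gaps left at zero: {mean_abs_error(zero_filled):.3f}")
print(f"error after energy descent:   {mean_abs_error(recovered):.3f}")
```

The data term keeps the answer faithful to what was actually measured, while the prior term fills the gaps with values it considers plausible. A learned anatomical prior plays the same role as the smoothness term here, only with far more knowledge of what a normal image looks like.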

Because an EBM is explicitly designed to model the underlying distribution of “normal” data, it makes a powerful anomaly detector. In fields like cybersecurity, industrial defect monitoring, or medical diagnosis, normal data naturally falls into the model’s low-energy valleys. If a new file or data point is fed into the system and returns an unusually high energy score, the system flags it as an anomaly.

Power grid management is another example. Grid operators must deal with the unpredictable nature of renewable energy sources like solar and wind. Advanced generative models based on denoising processes can be used to model time series data for power generation, allowing the simulation of realistic load scenarios to keep the grid balanced.
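
The anomaly detection idea described above can be sketched in a few lines of standard-library Python. The sensor scenario, the Gaussian-shaped `energy` function and the 99% threshold are all assumptions chosen for illustration; a real EBM would learn a much more flexible energy function from the normal data.

```python
import random
import statistics

random.seed(1)

# Hypothetical "normal" sensor readings used to fit the model.
normal_readings = [random.gauss(50.0, 2.0) for _ in range(5000)]
mu = statistics.fmean(normal_readings)
sd = statistics.stdev(normal_readings)


def energy(x: float) -> float:
    """Low for readings that resemble the normal data, high otherwise."""
    return 0.5 * ((x - mu) / sd) ** 2


# Set the alarm threshold so that about 1% of normal readings exceed it.
training_energies = sorted(energy(x) for x in normal_readings)
threshold = training_energies[int(0.99 * len(training_energies))]


def is_anomaly(x: float) -> bool:
    """Flag a reading whose energy is unusually high."""
    return energy(x) > threshold


for reading in (50.5, 70.0):
    print(f"reading {reading}: energy {energy(reading):.2f}, "
          f"anomaly = {is_anomaly(reading)}")
```

The threshold is a design choice: set it lower and more genuine anomalies are caught at the cost of more false alarms, set it higher and the reverse applies.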

There is a price to pay for this approach, however, and that price is the efficiency of inference. Once trained, an LLM is fast to produce an answer. An EBM is iterative and costlier at inference time: it searches for an answer, whereas an LLM computes each token directly from its weights. Moreover, some hardware, like Nvidia’s GPUs, is optimised for matrix multiplication, which is at the heart of how LLMs work. This means that LLMs are generally more efficient than EBMs at inference and can scale more easily. On the other hand, LLMs hallucinate, making them ill-suited to tasks where consistency and accuracy are important.

The two styles of model are in a sense complementary. For example, an LLM can quickly produce a range of possible text answers or coding solutions. An EBM can then evaluate and score these, acting as a verification layer on top of the LLM. This can be used for fact checking, code correctness scoring, or physical plausibility checks in the case of robotics. Reward models, verifiers and scoring models are conceptually related to EBMs. Some AI labs building foundation models use them today to try to improve on raw LLM output, as can be seen in the reasoning chains used in more recent LLMs.
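
As a rough sketch of this generate-then-verify pattern, the example below scores a handful of candidate solutions and picks the one with the lowest energy. All names here are hypothetical: the three `candidate_*` functions stand in for code an LLM might propose for "return the absolute value of a number", and the energy is simply the number of failed test cases rather than the output of a trained model.

```python
from typing import Callable, List

# Hypothetical candidate solutions, standing in for LLM-generated code.
def candidate_a(x: int) -> int:
    return x                      # ignores negative inputs


def candidate_b(x: int) -> int:
    return -x if x < 0 else x     # handles both signs


def candidate_c(x: int) -> int:
    return x * x                  # confuses absolute value with squaring


# (input, expected output) pairs used to judge each candidate.
TEST_CASES = [(-3, 3), (0, 0), (2, 2), (-1, 1)]


def energy(candidate: Callable[[int], int]) -> int:
    """Energy = number of failed checks, so zero means fully consistent."""
    failures = 0
    for argument, expected in TEST_CASES:
        try:
            if candidate(argument) != expected:
                failures += 1
        except Exception:
            failures += 1         # a crash counts as a failure too
    return failures


def rerank(candidates: List[Callable[[int], int]]):
    """Order candidates from lowest energy (best) to highest."""
    return sorted(candidates, key=energy)


ranked = rerank([candidate_a, candidate_b, candidate_c])
for candidate in ranked:
    print(f"{candidate.__name__}: energy {energy(candidate)}")
```

The generator stays fast because it only has to propose candidates, while the scorer carries the burden of consistency. The result is only as good as the scoring: a candidate that passes every check here could still be wrong on inputs the checks do not cover.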

Some leading researchers are advocates of EBMs, among them Yann LeCun, one of the three “godfathers of AI” (along with Geoffrey Hinton and Yoshua Bengio) and now founder of Advanced Machine Intelligence Labs. There are also start-ups building EBMs, like Logical Intelligence in San Francisco, and open-source developer tools like TorchEBM and Learnergy. It is early days for EBMs in terms of production products, but anything that promises to largely eliminate hallucinations is surely a technology that should be encouraged.

LLMs hallucinate at a high rate. Perhaps one in five LLM answers is fabricated, with some recent models reportedly showing even higher rates. This makes LLMs very challenging to implement for a wide range of applications in industry, where even a 1% error rate is unacceptably high. No one wants a bank transfer system that sends 20% of payments to random bank accounts, or a delivery system that sends 20% of parcels to random addresses, let alone errors on that scale in safety-critical systems.

EBMs are still early-stage, but they are a technology to watch: they have the potential to radically reduce hallucination rates, and they offer explicit scoring, constraint handling, and a natural fit for verification tasks. They are unlikely to replace LLMs, but they may complement them in settings where consistency, physical plausibility, or anomaly detection matters more than fluent generation of text.